John Forbes Nash and his wife Alicia were tragically killed in a car crash on May 23, having just returned from a ceremony in Norway where John Nash received the prestigious Abel Prize in Mathematics (which, along with the Fields Medal, is the closest thing mathematics has to a Nobel Prize). Nash’s long struggle with mental illness, as well as his miraculous recovery, are depicted vividly in Sylvia Nasar’s book “A Beautiful Mind” and the Oscar-winning film which it inspired. In this post, I want to give a brief account of Nash’s work in game theory, for which he won the 1994 Nobel Prize in Economics. Before doing that, I should mention, however, that while this is undoubtedly Nash’s most influential work, he did many other things which from a purely mathematical point of view are much more technically difficult. Nash’s Abel Prize, for example (which he shared with Louis Nirenberg), was for his work in non-linear partial differential equations and its applications to geometric analysis, which most mathematicians consider to be Nash’s deepest contribution to mathematics. You can read about that work here.

On, now, to game theory. We will focus, for simplicity, on the theory of non-cooperative matrix games between two players $P_1$ and $P_2$. We begin with the theory of zero-sum games, where any gain for $P_1$ corresponds to an equal loss for $P_2$ and vice-versa. Suppose $A = (a_{ij})$ is an $m \times n$ matrix of real numbers. Define a mixed strategy for $P_1$ to be a vector $x = (x_1, \ldots, x_m)$ with $x_i \geq 0$ for all $i$ and $\sum_{i=1}^m x_i = 1$, and similarly define a mixed strategy for $P_2$ to be a vector $y = (y_1, \ldots, y_n)$ with $y_j \geq 0$ for all $j$ and $\sum_{j=1}^n y_j = 1$. We denote by $\Delta_k$ the simplex representing the set of all mixed strategies for player $P_k$ ($k = 1, 2$). The expected payoff to $P_1$ if $P_1$ plays the mixed strategy $x$ and $P_2$ plays the mixed strategy $y$ is

$$A(x, y) = \sum_{i=1}^m \sum_{j=1}^n a_{ij} x_i y_j.$$

For each $i$, the mixed strategy $e_i$ which is $1$ in the $i^{\rm th}$ component and $0$ elsewhere is called the $i^{\rm th}$ pure strategy for $P_1$, and similarly for $P_2$ the mixed strategy $f_j$ which is $1$ in the $j^{\rm th}$ component and $0$ elsewhere is called the $j^{\rm th}$ pure strategy for $P_2$. One usually thinks of a mixed strategy as a probability distribution on the space of pure strategies. The $(i, j)$ entry of $A$ is the expected payoff to player $P_1$ if $P_1$ plays his $i^{\rm th}$ pure strategy and $P_2$ plays her $j^{\rm th}$ pure strategy. In general, $A(x, y)$ is the expected payoff to $P_1$ if $P_1$ plays his $i^{\rm th}$ pure strategy with probability $x_i$ and $P_2$ plays her $j^{\rm th}$ pure strategy with probability $y_j$.
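As a quick illustration of the expected payoff $A(x, y) = \sum_{i,j} a_{ij} x_i y_j$, here is a minimal Python sketch. The function name `expected_payoff` and the 2×2 "matching pennies" matrix are illustrative assumptions, not taken from the discussion above; exact rational arithmetic is used to avoid rounding noise.

```python
from fractions import Fraction

def expected_payoff(A, x, y):
    """Return A(x, y) = sum over i, j of a_ij * x_i * y_j."""
    return sum(A[i][j] * x[i] * y[j]
               for i in range(len(A))
               for j in range(len(A[0])))

# Hypothetical example matrix (matching pennies), chosen only for illustration.
A = [[1, -1],
     [-1, 1]]

x = [Fraction(1, 4), Fraction(3, 4)]   # mixed strategy for P_1
y = [Fraction(1, 2), Fraction(1, 2)]   # mixed strategy for P_2

print(expected_payoff(A, x, y))             # 0
# Pure strategies are the special case of 0/1 vectors:
# e_1 against f_2 just picks out the entry a_12.
print(expected_payoff(A, [1, 0], [0, 1]))   # -1
```

Playing a pure strategy against a pure strategy simply reads off a matrix entry, which is why the matrix alone determines the whole payoff function.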

Game theory began with the seminal 1928 paper of John von Neumann in which he proved the celebrated minimax theorem for zero-sum two-player games. Von Neumann defines a solution to the game to be a pair of mixed strategies $(\bar{x}, \bar{y})$ (called optimal strategies), together with a real number $v$ (called the value of the game), such that $A(\bar{x}, f_j) \geq v$ for all $j = 1, \ldots, n$ and $A(e_i, \bar{y}) \leq v$ for all $i = 1, \ldots, m$. Intuitively, this means that:

$P_1$ can assure himself of an expected payoff of at least $v$, no matter which pure strategy $P_2$ plays, by playing the mixed strategy $\bar{x}$, and

$P_2$ can protect herself from an expected loss of more than $v$, no matter which pure strategy $P_1$ plays, by playing the mixed strategy $\bar{y}$.

The remarkable and fundamental theorem proved by von Neumann is that every zero-sum two-player matrix game as above has a solution. To illustrate this with an example, suppose Player 1 and Player 2 each independently choose either rock, paper, or scissors, and the loser (according to the usual rules) pays the winner a dollar. This can be summarized by the payoff matrix

$$A = \begin{pmatrix} 0 & -1 & 1 \\ 1 & 0 & -1 \\ -1 & 1 & 0 \end{pmatrix}$$

(rows are Player 1's choices and columns are Player 2's, both ordered rock, paper, scissors; entries are payoffs to Player 1).

It is easy to check that $(1/3, 1/3, 1/3)$ is the unique optimal mixed strategy for both players (with value 0). In other words, both players should play rock, paper, and scissors with equal probabilities, and if they do this then each player’s expected payoff is zero.

For a more complicated example, suppose Player 1 is given the Ace of Diamonds, Ace of Clubs, and Two of Diamonds while Player 2 is given the Ace of Diamonds, Ace of Clubs, and Two of Clubs. Each player chooses one of his cards; Player 1 wins if the suits match and Player 2 wins if they do not. The amount of the payoff is the numerical value of the card chosen by the winner (an Ace counting as 1), with one exception: if both players choose their Two then the payoff is zero. This can be summarized by the payoff matrix

$$A = \begin{pmatrix} 1 & -1 & -2 \\ -1 & 1 & 1 \\ 2 & -1 & 0 \end{pmatrix}$$

(rows and columns list each player's cards in the order given above; entries are payoffs to Player 1).

Exercise: Show that the mixed strategies $(0, 3/5, 2/5)$ for Player 1 and $(2/5, 3/5, 0)$ for Player 2 are optimal strategies with common value $1/5$.
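Von Neumann's conditions for a solution only involve finitely many inequalities (one per pure strategy of each player), so a candidate solution is easy to check mechanically. The sketch below does this for the two examples above. The helper name `is_solution` is hypothetical, and the payoff matrices are written out from the stated rules (rock, paper, scissors in that order; cards in the order listed, Ace counting as 1), so treat them as my reading of those rules.

```python
from fractions import Fraction as F

def is_solution(A, x_bar, y_bar, v):
    """Check A(x_bar, f_j) >= v for every column j
    and A(e_i, y_bar) <= v for every row i."""
    m, n = len(A), len(A[0])
    p1_guarantee = all(
        sum(x_bar[i] * A[i][j] for i in range(m)) >= v for j in range(n))
    p2_guarantee = all(
        sum(A[i][j] * y_bar[j] for j in range(n)) <= v for i in range(m))
    return p1_guarantee and p2_guarantee

# Rock-paper-scissors, payoffs to Player 1.
RPS = [[0, -1, 1],
       [1, 0, -1],
       [-1, 1, 0]]
uniform = [F(1, 3)] * 3

# Card game, payoffs to Player 1.
CARDS = [[1, -1, -2],
         [-1, 1, 1],
         [2, -1, 0]]
x_cards = [F(0), F(3, 5), F(2, 5)]
y_cards = [F(2, 5), F(3, 5), F(0)]

print(is_solution(RPS, uniform, uniform, 0))            # True
print(is_solution(CARDS, x_cards, y_cards, F(1, 5)))    # True
print(is_solution(RPS, [1, 0, 0], uniform, 0))          # False: always playing rock loses to paper
```

A check like this confirms that a proposed pair is a solution; it does not by itself find one.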

An example of a non-zero sum game, to which von Neumann’s theorem does not apply but to which Nash’s theorem does, is the Prisoner’s Dilemma.

It is not difficult to show that von Neumann’s theorem is equivalent to the statement that for every zero-sum two-player matrix game, there exist mixed strategies $(\bar{x}, \bar{y})$ such that

$$A(x, \bar{y}) \leq A(\bar{x}, \bar{y}) \leq A(\bar{x}, y)$$

for all mixed strategies $x \in \Delta_1$ and $y \in \Delta_2$. The theorem is also equivalent to the statement that for every zero-sum two-player matrix game, the quantities

$$\max_{x \in \Delta_1} \min_{y \in \Delta_2} A(x, y) \quad \text{and} \quad \min_{y \in \Delta_2} \max_{x \in \Delta_1} A(x, y)$$

exist and are equal. In this last form, the result has become known as von Neumann’s minimax theorem. The min-max and the max-min are both equal to the value $v$ of the game, which in particular shows that this value is uniquely determined (though there might be more than one set of optimal strategies).

We now turn to Nash’s extension of von Neumann’s fundamental theorem in which one does not assume the zero-sum condition. (Nash’s theorem also applies to any number of players, which represents another improvement over von Neumann, but for simplicity of exposition we will stick to the two-player scenario.) Nash discovered this result — and the key concept now known as “Nash equilibrium” — as a graduate student at Princeton in the summer of 1949. Nash told von Neumann’s secretary that he wanted to discuss an idea which might be of interest to the distinguished professor. As Sylvia Nasar writes:

Von Neumann was sitting at an enormous desk, looking more like a prosperous bank president than an academic in his expensive three-piece suit, silk tie, and jaunty pocket handkerchief. He had the preoccupied air of a busy executive. At the time, he was holding a dozen consultancies, “arguing the ear off Robert Oppenheimer” over the development of the H-bomb, and overseeing the construction and programming of two prototype computers. He gestured Nash to sit down. He knew who Nash was, of course, but seemed a bit puzzled by his visit.

He listened carefully, with his head cocked slightly to one side and his fingers tapping. Nash started to describe the proof he had in mind… But before he had gotten out more than a few disjointed sentences, von Neumann interrupted, jumped ahead to the as yet unstated conclusion of Nash’s argument, and said abruptly, “That’s trivial, you know. That’s just a fixed point theorem.”

…[Nash] never approached von Neumann again. Nash later rationalized von Neumann’s reaction as the naturally defensive posture of an established thinker to a younger rival’s idea, a view that may say more about what was in Nash’s mind when he approached von Neumann than about the older man. Nash was certainly conscious that he was implicitly challenging von Neumann. Nash noted in his Nobel autobiography that his ideas “deviated somewhat from the ‘line’ (as if of ‘political party lines’) of von Neumann and Morgenstern’s book”.

A few days after the disastrous meeting with von Neumann, Nash accosted [fellow graduate student] David Gale… Unlike von Neumann, Gale saw Nash’s point… Gale realized that Nash’s idea applied to a far broader class of real-world situations than von Neumann’s notion of zero-sum games. “He had a concept that generalized to disarmament”, Gale said later. But Gale was less entranced by the possible applications of Nash’s idea than its elegance and generality. “The mathematics was so beautiful. It was so right mathematically.”

Gale suggested asking a member of the National Academy of Sciences to submit the proof to the academy’s monthly proceedings. “He was spacey. He would never have thought of doing that,” Gale said recently, “so he gave me his proof and I drafted the NAS note.”… Gale added later, “I certainly knew right away that it was a thesis. I didn’t know it was a Nobel.”

To explain Nash’s theorem, we first need an extension of the setup used above. Suppose $A = (a_{ij})$ and $B = (b_{ij})$ are $m \times n$ matrices of real numbers. Define mixed strategies for the two players $P_1$ and $P_2$ as before. If $P_1$ plays the mixed strategy $x$ and $P_2$ plays the mixed strategy $y$, there are now two expected payoffs: the payoff to $P_1$ is $A(x, y) = \sum_{i,j} a_{ij} x_i y_j$ and the payoff to $P_2$ is $B(x, y) = \sum_{i,j} b_{ij} x_i y_j$. The game is zero-sum if $B = -A$, or equivalently, the payoff $B(x, y)$ to $P_2$ is the negative of the payoff $A(x, y)$ to $P_1$ for all $(x, y)$. In this special case we recover von Neumann’s theory by setting $B = -A$ and focusing on the single payoff function $A(x, y)$ to $P_1$.

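A Nash equilibrium is a pair $(\bar{x}, \bar{y})$ with $\bar{x} \in \Delta_1$ and $\bar{y} \in \Delta_2$ such that $A(\bar{x}, \bar{y}) \geq A(x, \bar{y})$ for all $x \in \Delta_1$ and $B(\bar{x}, \bar{y}) \geq B(\bar{x}, y)$ for all $y \in \Delta_2$. The idea of a Nash equilibrium is that neither player can unilaterally improve his or her payoff by switching to a different mixed strategy.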
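To make the definition concrete, here is a minimal sketch that tests whether a pair of strategies is a Nash equilibrium of a two-matrix game with payoff matrices `A` (to the first player) and `B` (to the second). It only compares against pure-strategy deviations, which suffices because each payoff is linear in the deviating player's strategy. The Prisoner's Dilemma payoffs below are illustrative numbers of my own choosing (negated years in prison), and the helper names are hypothetical.

```python
def payoff(M, x, y):
    """Expected payoff M(x, y) = sum over i, j of m_ij * x_i * y_j."""
    return sum(M[i][j] * x[i] * y[j]
               for i in range(len(M)) for j in range(len(M[0])))

def unit(i, k):
    """The i-th pure strategy as a 0/1 vector of length k."""
    return [1 if t == i else 0 for t in range(k)]

def is_nash(A, B, x, y):
    """True if neither player gains by deviating to any pure strategy."""
    m, n = len(A), len(A[0])
    a, b = payoff(A, x, y), payoff(B, x, y)
    p1_ok = all(payoff(A, unit(i, m), y) <= a for i in range(m))
    p2_ok = all(payoff(B, x, unit(j, n)) <= b for j in range(n))
    return p1_ok and p2_ok

# Prisoner's Dilemma with assumed payoffs; strategy 0 = stay silent, 1 = confess.
A = [[-1, -10],
     [0, -5]]     # payoffs to the first prisoner
B = [[-1, 0],
     [-10, -5]]   # payoffs to the second prisoner

print(is_nash(A, B, [0, 1], [0, 1]))   # True: both confess
print(is_nash(A, B, [1, 0], [1, 0]))   # False: each would rather confess
```

The game is not zero-sum (the two payoffs do not cancel), yet it has an equilibrium, and that equilibrium leaves both players worse off than mutual silence would.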

With this terminology, Nash’s fundamental theorem is:

A Nash equilibrium exists for every (not necessarily zero-sum) matrix game.

Nash’s original proof of this theorem was based on the Kakutani fixed point theorem, a generalization of the more standard Brouwer fixed point theorem which was invented by Kakutani to streamline and clarify von Neumann’s complicated original proof of the minimax theorem. Nash later found an elegant argument based on the Brouwer fixed point theorem itself, which states that if $X$ is homeomorphic to a Euclidean ball then any continuous map from $X$ to itself must have a fixed point. (It was not even known before Nash’s paper how to deduce von Neumann’s minimax theorem directly from the Brouwer theorem.) Here is Nash’s elegant “Proof from the Book”:

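Proof: Let $\Delta = \Delta_1 \times \Delta_2$. Given mixed strategies $(x, y) \in \Delta$, set

$$c_i(x, y) = \max\{0, A(e_i, y) - A(x, y)\}$$

for $i = 1, \ldots, m$ and

$$d_j(x, y) = \max\{0, B(x, f_j) - B(x, y)\}$$

for $j = 1, \ldots, n$.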

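We claim that $(x, y)$ is a Nash equilibrium if and only if $c_i(x, y) = 0$ and $d_j(x, y) = 0$ for all $i, j$. One direction is clear from the definition. For the other direction, note that if $c_i(x, y) = 0$ for all $i$ then $A(e_i, y) \leq A(x, y)$ for all $i$, which implies by linearity that $A(\tilde{x}, y) \leq A(x, y)$ for all mixed strategies $\tilde{x} \in \Delta_1$, and similarly for $d_j$ and $B$.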

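Now define a continuous map $T : \Delta \to \Delta$ by setting $T(x, y) = (x', y')$ with

$$x'_i = \frac{x_i + c_i(x, y)}{1 + \sum_{k=1}^m c_k(x, y)}, \qquad y'_j = \frac{y_j + d_j(x, y)}{1 + \sum_{k=1}^n d_k(x, y)}.$$

We claim that a pair of mixed strategies $(x, y)$ is a Nash equilibrium if and only if $(x, y)$ is a fixed point of $T$. Given this, the theorem follows immediately from the Brouwer fixed point theorem since $\Delta$ is homeomorphic to the unit ball in $\mathbb{R}^{m+n-2}$ and thus $T$ must have a fixed point.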

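One direction of the claim is clear: if $(x, y)$ is a Nash equilibrium then all $c_i(x, y) = d_j(x, y) = 0$ and thus $(x, y)$ is a fixed point of $T$. Conversely, suppose $(x, y)$ is a fixed point of $T$. Since $A(x, y) = \sum_i x_i A(e_i, y)$ is a convex combination of the $A(e_i, y)$ for which $x_i > 0$, we must have $A(e_i, y) \leq A(x, y)$ for some $i$ with $x_i > 0$. Hence $c_i(x, y) = 0$ for some $i$ with $x_i > 0$, which implies (using the equality $x_i = x'_i = x_i / (1 + \sum_k c_k(x, y))$) that $\sum_k c_k(x, y) = 0$. Comparing the other components of $x$ and $x'$ now shows that $c_i(x, y) = 0$ for all $i$. Similarly, we have $d_j(x, y) = 0$ for all $j$. Thus $(x, y)$ is a Nash equilibrium by our earlier claim.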
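The map used in the proof is easy to experiment with. The sketch below assumes the standard form of Nash's map: with gains $c_i = \max\{0, A(e_i, y) - A(x, y)\}$ and $d_j = \max\{0, B(x, f_j) - B(x, y)\}$, each player shifts weight toward profitable pure strategies and renormalizes, so that $x'_i = (x_i + c_i)/(1 + \sum_k c_k)$ and similarly for $y'$. The function names are hypothetical. Note that the proof only uses the existence of a fixed point; iterating the map is not claimed to converge.

```python
from fractions import Fraction as F

def payoff(M, x, y):
    return sum(M[i][j] * x[i] * y[j]
               for i in range(len(M)) for j in range(len(M[0])))

def unit(i, k):
    return [1 if t == i else 0 for t in range(k)]

def nash_map(A, B, x, y):
    """One application of the map T(x, y) = (x', y') described above."""
    m, n = len(A), len(A[0])
    a, b = payoff(A, x, y), payoff(B, x, y)
    # Gain available to each player from switching to a given pure strategy.
    c = [max(0, payoff(A, unit(i, m), y) - a) for i in range(m)]
    d = [max(0, payoff(B, x, unit(j, n)) - b) for j in range(n)]
    x_new = [(F(x[i]) + c[i]) / (1 + sum(c)) for i in range(m)]
    y_new = [(F(y[j]) + d[j]) / (1 + sum(d)) for j in range(n)]
    return x_new, y_new

# Rock-paper-scissors as a two-matrix game with B = -A.
A = [[0, -1, 1], [1, 0, -1], [-1, 1, 0]]
B = [[-a for a in row] for row in A]
uniform = [F(1, 3)] * 3

# The equilibrium (uniform, uniform) is a fixed point.
fixed = nash_map(A, B, uniform, uniform) == (uniform, uniform)

# If P_1 always plays rock, P_2's strategy is pushed toward paper:
# y' = (1/6, 2/3, 1/6), so (rock, uniform) is not a fixed point.
x_new, y_new = nash_map(A, B, [1, 0, 0], uniform)
print(fixed, y_new == [F(1, 6), F(2, 3), F(1, 6)])   # True True
```

In the second call $P_1$ has no profitable deviation against the uniform strategy, so $x$ is unchanged; only $P_2$'s component moves.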

As a final coda to this post, we note that the Brouwer fixed point theorem itself is equivalent to a certain theorem proved by John Nash about the game of Hex, which Nash himself invented as a graduate student at Princeton. (Hex was invented independently by a Danish man named Piet Hein, who marketed the game with Parker Brothers in the mid-1950’s.) A number of Nash’s fellow grad students recall thinking that Nash spent all of his time at Princeton in the common room playing board games. One morning in the winter of 1949, Nash ran into David Gale and shouted “Gale! I have an example of a game with perfect information. There’s no luck, just pure strategy. I can prove that the first player always wins, but I have no idea what his strategy will be. If the first player loses at this game, it’s because he’s made a mistake, but nobody knows what the perfect strategy is.”

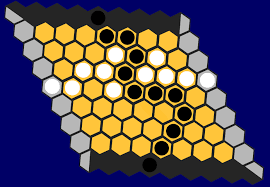

The game board for Hex consists of a rhombus tiled by hexagons, with $n$ hexagons on each side. Two opposite edges of the board are colored black, the others white. The players take turns placing black (resp. white) stones on the hexagons, and once played a piece can never be moved. The black player tries to construct a connected chain of black stones between the two black boundaries, while the white player tries to construct a connected chain of white stones between the two white boundaries. Nash showed that the game can never end in a draw. This implies, by an idea which has come to be known as “strategy stealing”, that the first player can always win. Indeed, it is a basic fact of game theory that in a finite two-player game of perfect information where no draws are possible, one of the two players always has a winning strategy. Now suppose, for the sake of contradiction, that the second player has a winning strategy in Hex. Imagine the first player makes an arbitrary move and the second player responds. The first player now puts a stone in the reflected position corresponding to the second player’s move. The game proceeds with the first player always playing the reflection of the second player’s hypothetical winning strategy. If this requires putting a stone where one of the first player’s own stones already appears, then the first player plays arbitrarily, which can only further contribute to forming a chain. The second player cannot win in this scenario, a contradiction. Sylvia Nasar writes:

When Gale finally understood what Nash was trying to tell him, he was captivated… The game quickly swept across the common room. It brought Nash many admirers, including the young John Milnor, who was beguiled by its ingenuity and beauty… Nash’s proof [that there can never be a draw, and thus that the first player can always win] is extremely deft, “marvelously non-constructive” in the words of [John] Milnor, who plays it very well. If the board is covered by black and white pieces, there’s always a chain that connects black to black or white to white, but never both. As Gale put it, “You can walk from Mexico to Canada or swim from California to New York, but you can’t do both.”
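The no-draw property (a completely filled board always has a black chain or a white chain, but never both) can be checked empirically on small boards. The sketch below is only a sanity check on random boards, not a proof. It assumes a particular encoding that is my own choice: the rhombus is stored as an $n \times n$ array in which cell $(r, c)$ touches $(r \pm 1, c)$, $(r, c \pm 1)$, $(r - 1, c + 1)$ and $(r + 1, c - 1)$, Black owns the top and bottom edges, and White owns the left and right edges.

```python
import random

# The six neighbours of a hexagonal cell in the array encoding described above.
NEIGHBORS = [(-1, 0), (1, 0), (0, -1), (0, 1), (-1, 1), (1, -1)]

def connects(board, color):
    """Depth-first search: does `color` link its two edges?
    'B' must link row 0 to row n-1; 'W' must link column 0 to column n-1."""
    n = len(board)
    if color == 'B':
        start = [(0, c) for c in range(n) if board[0][c] == 'B']
        reached_far_edge = lambda r, c: r == n - 1
    else:
        start = [(r, 0) for r in range(n) if board[r][0] == 'W']
        reached_far_edge = lambda r, c: c == n - 1
    seen, stack = set(start), list(start)
    while stack:
        r, c = stack.pop()
        if reached_far_edge(r, c):
            return True
        for dr, dc in NEIGHBORS:
            rr, cc = r + dr, c + dc
            if (0 <= rr < n and 0 <= cc < n
                    and (rr, cc) not in seen and board[rr][cc] == color):
                seen.add((rr, cc))
                stack.append((rr, cc))
    return False

def random_full_board(n, rng):
    """A board with every cell filled by a random colour."""
    return [[rng.choice('BW') for _ in range(n)] for _ in range(n)]

rng = random.Random(0)
boards = [random_full_board(5, rng) for _ in range(1000)]
# Exactly one colour should connect on every filled board.
exactly_one_winner = all(connects(b, 'B') != connects(b, 'W') for b in boards)
print(exactly_one_winner)   # True
```

The diagonal pair of neighbours is what makes the grid hexagonal; on an ordinary square grid with only four neighbours per cell, a filled board can have no winner at all.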

In his lovely paper “The Game of Hex and the Brouwer Fixed-Point Theorem”, David Gale writes:

The application of mathematics to games of strategy is now represented by a voluminous literature. Recently there has also been some work which goes in the other direction, using known facts about games to obtain mathematical results in other areas. The present paper is in this latter spirit. Our main purpose is to show that a classical result of topology, the celebrated Brouwer Fixed-Point Theorem, is an easy consequence of the fact that Hex, a game which is probably familiar to many mathematicians, cannot end in a draw. This fact is of some practical as well as theoretical interest, for it turns out that the two-player, two-dimensional game of Hex has a natural generalization to a game of n players and n dimensions, and the proof that this game must always have a winner leads to a simple algorithm for finding approximate fixed points of continuous mappings. This latter subject is one of considerable current interest, especially in the area of mathematical economics. This paper has therefore the dual purpose of, first, showing the equivalence of the Hex and Brouwer Theorems and, second, introducing the reader to the subject of fixed-point computations.

I should say that over the years I have heard it asserted in “cocktail conversation” that the Hex and Brouwer Theorems were equivalent, and my colleague John Stallings has shown me an argument which derives the Hex Theorem from familiar topological facts which are equivalent to the Brouwer Theorem. The proof going in the other direction only occurred to me recently, but in view of its simplicity it may well be that others have been aware of it. The generalization to n dimensions may, however, be new.

We encourage the reader to check out Gale’s article, as well as this blog post.

Concluding remarks:

1. Nash also made many other profound contributions to mathematics, including my own field of algebraic geometry; for example, he proved that given any smooth compact $n$-dimensional real manifold $M$, there is a real algebraic variety $X$ having a connected component $X_0$ which is diffeomorphic to $M$. This is the kind of result which no one else had dared to conjecture, or probably even think about, because it appears impossibly strong — and yet Nash proved it. Mike Artin and Barry Mazur used this result in their famous paper on periodic points of dynamical systems.

2. My exposition of von Neumann’s minimax theorem was heavily influenced by Harold W. Kuhn’s book “Lectures on the Theory of Games”.

3. One can prove Nash’s theorem on the game of Hex by elementary means; see for example this paper by Berman or this paper by Huneke. Combined with Gale’s theorem, these arguments give elementary proofs of the Brouwer Fixed-Point theorem. My exposition of the fact that the first player can always win in Hex was adapted from this AMS feature column.

4. For a mathematical analysis of the bar scene where Russell Crowe, as John Nash, explains his notion of equilibrium in the movie “A Beautiful Mind”, see this blog post.
