Test your intuition: is the following true or false?

Assertion 1: If $A$ is a square matrix over a commutative ring $R$, the rows of $A$ are linearly independent over $R$ if and only if the columns of $A$ are linearly independent over $R$.

(All rings in this post will be nonzero commutative rings with identity.)

And how about the following generalization?

Assertion 2: If $A$ is an $m \times n$ matrix over a commutative ring $R$, the row rank of $A$ (the maximum number of $R$-linearly independent rows) equals the column rank of $A$ (the maximum number of $R$-linearly independent columns).

If you want to know the answers, read on…

A criterion for surjectivity of an $R$-linear map

My previous post explored the theory of Fitting ideals. A simple consequence of this theory is the following:

Theorem 1: Let $A$ be an $m \times n$ matrix with entries in a nonzero commutative ring $R$ with identity. The corresponding map $R^n \to R^m$ is surjective if and only if $m \le n$ and $I_m(A) = R$.

(Recall that $I_k(A)$ denotes the ideal generated by all $k \times k$ minors of $A$.)

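Proof: Let $M$ be the cokernel of $A$. On the one hand, $A$ is surjective iff $M = 0$ iff $\operatorname{Fit}_0(M) = R$, where the last equivalence follows from the fact that $\operatorname{Fit}_0(M)$ annihilates $M$. On the other hand, $R^n \xrightarrow{A} R^m \to M \to 0$ is a presentation for $M$, so $\operatorname{Fit}_0(M) = I_m(A)$. Q.E.D.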
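As a sanity check on Theorem 1, here is a minimal Python sketch, assuming $R = \mathbf{Z}/N\mathbf{Z}$ (a choice made purely for illustration, since there every ideal is generated by a divisor of $N$). The helper names `det`, `minors_ideal_generator`, `is_surjective_by_minors` and `is_surjective_brute_force` are hypothetical; the sketch compares the minors criterion with a brute-force enumeration of the image.

```python
from itertools import combinations, product
from math import gcd


def det(M):
    """Integer determinant by Laplace expansion along the first row."""
    if not M:
        return 1  # the empty (0 x 0) determinant is 1
    return sum(
        (-1) ** j * M[0][j] * det([row[:j] + row[j + 1:] for row in M[1:]])
        for j in range(len(M))
    )


def minors_ideal_generator(A, k, N):
    """Return g dividing N such that the k x k minors of A generate (g) in Z/N."""
    m, n = len(A), len(A[0])
    g = N  # N represents 0, so we start from the zero ideal
    for rows in combinations(range(m), k):
        for cols in combinations(range(n), k):
            g = gcd(g, det([[A[i][j] for j in cols] for i in rows]))
    return g


def is_surjective_by_minors(A, N):
    """Theorem 1 over Z/N: m <= n and the m x m minors generate the unit ideal."""
    m, n = len(A), len(A[0])
    return m <= n and minors_ideal_generator(A, m, N) == 1


def is_surjective_brute_force(A, N):
    """Enumerate the image of x -> Ax on (Z/N)^n and compare it with (Z/N)^m."""
    m, n = len(A), len(A[0])
    image = {
        tuple(sum(A[i][j] * x[j] for j in range(n)) % N for i in range(m))
        for x in product(range(N), repeat=n)
    }
    return len(image) == N ** m


# (2 3) over Z/6: the 1 x 1 minors 2 and 3 generate the unit ideal, since 3 - 2 = 1.
print(is_surjective_by_minors([[2, 3]], 6), is_surjective_brute_force([[2, 3]], 6))
# (2 4) over Z/6: the minors only generate the ideal (2).
print(is_surjective_by_minors([[2, 4]], 6), is_surjective_brute_force([[2, 4]], 6))
```

The brute-force check is only feasible for small $N$ and small matrices; it is there to confirm the criterion, not to replace it.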

The rest of this post will be about a complementary criterion for deciding when an $R$-linear map $R^n \to R^m$ is injective.

McCoy’s theorem

We have the following useful complement to Theorem 1:

Theorem 2 (McCoy): Let $A$ be an $m \times n$ matrix with entries in a nonzero commutative ring $R$ with identity. The corresponding map $R^n \to R^m$ is injective if and only if $n \le m$ and the annihilator of $I_n(A)$ is zero.

(Recall that the annihilator of an $R$-module $M$ is the set of $r \in R$ such that $rm = 0$ for all $m \in M$.)
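To make the statement concrete, here is a small Python sketch, again assuming $R = \mathbf{Z}/N\mathbf{Z}$ for illustration. The function names (`minors`, `annihilator_of_minors`, `is_injective_by_mccoy`, `is_injective_brute_force`) are hypothetical. The annihilator is computed by simply testing every element of the ring, which is only sensible for small $N$.

```python
from itertools import combinations, product


def det(M):
    """Integer determinant by Laplace expansion along the first row."""
    if not M:
        return 1
    return sum(
        (-1) ** j * M[0][j] * det([row[:j] + row[j + 1:] for row in M[1:]])
        for j in range(len(M))
    )


def minors(A, k):
    """All k x k minors of A, as integers."""
    m, n = len(A), len(A[0])
    return [
        det([[A[i][j] for j in cols] for i in rows])
        for rows in combinations(range(m), k)
        for cols in combinations(range(n), k)
    ]


def annihilator_of_minors(A, k, N):
    """Elements r of Z/N with r * D = 0 for every k x k minor D of A."""
    ds = minors(A, k)
    return [r for r in range(N) if all(r * d % N == 0 for d in ds)]


def is_injective_by_mccoy(A, N):
    """McCoy's criterion: n <= m and only 0 annihilates all n x n minors."""
    m, n = len(A), len(A[0])
    return n <= m and annihilator_of_minors(A, n, N) == [0]


def is_injective_brute_force(A, N):
    """Check directly that no nonzero x in (Z/N)^n satisfies Ax = 0."""
    m, n = len(A), len(A[0])
    return all(
        any(sum(A[i][j] * x[j] for j in range(n)) % N for i in range(m))
        for x in product(range(N), repeat=n)
        if any(x)
    )


# The 2 x 1 matrix with entries 2, 3 over Z/6: only r = 0 kills both minors.
print(is_injective_by_mccoy([[2], [3]], 6), is_injective_brute_force([[2], [3]], 6))
# Its transpose (2 3) has n = 2 > m = 1, and indeed (3, 2) lies in the kernel.
print(is_injective_by_mccoy([[2, 3]], 6), is_injective_brute_force([[2, 3]], 6))
```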

In particular:

Corollary 1: If $n > m$, a system $Ax = 0$ of $m$ homogeneous linear equations in $n$ variables with coefficients in a commutative ring $R$ has a nonzero solution over $R$.

If $R$ is an integral domain, the corollary follows from the corresponding fact in linear algebra by passing to the field of fractions, but for rings with zero-divisors it’s not at all clear how to reduce such a statement to the case of fields.

Some people (looking at you, Sheldon Axler) like to minimize the role of determinants in linear algebra, but I’m not aware of any method for proving Corollary 1 without using determinants. So while linear algebra over fields can largely be developed in a determinant-free manner, over rings determinants appear to be absolutely essential.

From Corollary 1 we immediately obtain:

Corollary 2 (“Invariance of domain”): If $R^m \cong R^n$ as $R$-modules, then $m = n$.

Additional consequences of McCoy’s theorem

Another corollary of McCoy’s theorem is the following, which shows that Assertion 1 at the beginning of this post is true:

Corollary 3: If $A$ is a square matrix over a commutative ring $R$, then the rows of $A$ are linearly independent over $R$ if and only if the columns of $A$ are linearly independent over $R$.

Indeed, by applying the theorem to $A$ and $A^T$, both conditions are equivalent to the assertion that $\det(A)$ is not a zero-divisor of $R$.
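For readers who like to see such statements checked by machine, here is a minimal sketch that tests Corollary 3 exhaustively on all $2 \times 2$ matrices over $\mathbf{Z}/N\mathbf{Z}$. The ring and the helper names (`independent`, `is_zero_divisor`, `check_all_2x2`) are assumptions made for this illustration, and "zero-divisor" is taken to mean an element killed by some nonzero element, so that $0$ counts as one.

```python
from itertools import product
from math import gcd


def independent(vectors, N):
    """True if no nontrivial Z/N-linear combination of the vectors vanishes."""
    length = len(vectors[0])
    return all(
        any(sum(c * v[t] for c, v in zip(coeffs, vectors)) % N for t in range(length))
        for coeffs in product(range(N), repeat=len(vectors))
        if any(coeffs)
    )


def is_zero_divisor(d, N):
    """In Z/N, d is killed by some nonzero r exactly when gcd(d, N) > 1."""
    return gcd(d, N) != 1


def check_all_2x2(N):
    """Compare rows, columns and determinant for every 2 x 2 matrix over Z/N."""
    for a, b, c, d in product(range(N), repeat=4):
        rows_ok = independent([(a, b), (c, d)], N)
        cols_ok = independent([(a, c), (b, d)], N)
        det_ok = not is_zero_divisor(a * d - b * c, N)
        if not (rows_ok == cols_ok == det_ok):
            return (a, b, c, d)  # a counterexample, which Corollary 3 rules out
    return None


print(check_all_2x2(6))
```

Of course this proves nothing beyond the finitely many cases it enumerates, but it is a quick way to convince yourself that the three conditions really do move together.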

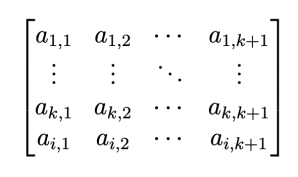

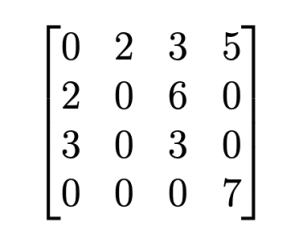

However, Assertion 2 is false: it is not true in general that the row rank of a matrix equals the column rank. For example, the row rank of the $1 \times 2$ matrix $\begin{pmatrix} 2 & 3 \end{pmatrix}$ over $\mathbf{Z}/6\mathbf{Z}$ is 1 but the column rank is 0.
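The counterexample is small enough to verify by brute force. The following sketch (the helper names `independent`, `rank`, `row_rank` and `column_rank` are hypothetical) computes both ranks of the matrix $(2 \;\, 3)$ over $\mathbf{Z}/6\mathbf{Z}$ straight from the definition, by trying every coefficient vector.

```python
from itertools import combinations, product


def independent(vectors, N):
    """True if no nontrivial Z/N-linear combination of the vectors vanishes."""
    length = len(vectors[0])
    return all(
        any(sum(c * v[t] for c, v in zip(coeffs, vectors)) % N for t in range(length))
        for coeffs in product(range(N), repeat=len(vectors))
        if any(coeffs)
    )


def rank(vectors, N):
    """Largest size of a Z/N-linearly independent subset of the given vectors."""
    for size in range(len(vectors), 0, -1):
        if any(independent(list(s), N) for s in combinations(vectors, size)):
            return size
    return 0


def row_rank(A, N):
    return rank([tuple(row) for row in A], N)


def column_rank(A, N):
    return rank(list(zip(*A)), N)


A = [[2, 3]]
print(row_rank(A, 6), column_rank(A, 6))  # prints: 1 0
```

The single row is independent because $2r = 3r = 0$ forces $r = 3r - 2r = 0$, while the column $(2)$ is killed by $3$ and the column $(3)$ is killed by $2$.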

Corollary 4: Suppose $M$ is a finitely generated $R$-module. Then the maximal size of a linearly independent set in $M$ is at most the minimum size of a generating set of $M$, with equality if and only if $M$ is free.

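Proof: It suffices to prove that if $x_1, \ldots, x_n$ generate $M$ and $y_1, \ldots, y_n$ are linearly independent then $x_1, \ldots, x_n$ are linearly independent (i.e., they form a basis for $M$). Since the $x_i$ generate, we have $y = Ax$ for some $n \times n$ matrix $A$ over $R$, where $x$ and $y$ denote the column vectors with entries $x_i$ and $y_i$. If $v^T A = 0$ for some non-zero column vector $v \in R^n$ then $v^T y = v^T A x = 0$, contradicting the independence of the $y_i$. By Theorem 2 (applied to $A^T$), it follows that $\det(A)$ is not a zero divisor in $R$.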

Consider the localization $R' = R[1/d]$, where $d = \det(A)$. Over $R'$, the matrix $A$ has an inverse $B$. If $c^T x = 0$ for some column vector $c \in R^n$, then (with the obvious notation) $c^T B y = 0$. But the $y_i$ remain independent over $R'$, since we can clear the denominator in any $R'$-linear dependence to get a dependence over $R$ (this is where we use the fact that $d$ is not a zero-divisor). Thus $c^T B = 0$, hence $c = 0$. It follows that the $x_i$ are linearly independent over $R$ as claimed. Q.E.D.

Proof of McCoy’s Theorem

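The proof will use the following well-known identities which are valid for any $n \times n$ matrix $A = (a_{ij})$ over any commutative ring $R$:

Laplace’s formula: For all $1 \le i \le n$, $\det(A) = \sum_{j=1}^{n} (-1)^{i+j} a_{ij} M_{ij}$, where $M_{ij}$ is the minor of rank $n-1$ obtained from $A$ by deleting row $i$ and column $j$.

Cramer’s formula: There is an $n \times n$ matrix $B$ such that $AB = BA = \det(A) \cdot \mathrm{Id}_n$, where $\mathrm{Id}_n$ is the $n \times n$ identity matrix.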
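Both identities are easy to experiment with. In the sketch below, `det` expands along the first row as in Laplace’s formula, and `adjugate` builds the usual candidate for the matrix $B$ in Cramer’s formula, namely the transpose of the cofactor matrix. The function names and the sample matrix are my own choices for illustration. The computation is done over $\mathbf{Z}$, and an identity that holds over $\mathbf{Z}$ holds after reduction modulo any $N$.

```python
def det(M):
    """Integer determinant by Laplace expansion along the first row."""
    if not M:
        return 1
    return sum(
        (-1) ** j * M[0][j] * det([row[:j] + row[j + 1:] for row in M[1:]])
        for j in range(len(M))
    )


def adjugate(A):
    """Transpose of the cofactor matrix: entry (i, j) is (-1)^(i+j) times the
    minor of A obtained by deleting row j and column i."""
    n = len(A)
    return [
        [
            (-1) ** (i + j)
            * det([row[:i] + row[i + 1:] for t, row in enumerate(A) if t != j])
            for j in range(n)
        ]
        for i in range(n)
    ]


def matmul(X, Y):
    """Plain matrix product of two lists of rows."""
    return [
        [sum(X[i][t] * Y[t][j] for t in range(len(Y))) for j in range(len(Y[0]))]
        for i in range(len(X))
    ]


A = [[2, 1, 0], [0, 3, 1], [1, 0, 4]]
B = adjugate(A)
d = det(A)
scalar = [[d if i == j else 0 for j in range(3)] for i in range(3)]
print(matmul(A, B) == scalar and matmul(B, A) == scalar)
```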

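Proof of the theorem: The “if” direction is easy: suppose $n \le m$ and $Ax = 0$ with $x \in R^n$. Then for every rank $n$ minor $B$ of $A$ (that is, every $n \times n$ submatrix obtained by selecting $n$ rows of $A$), we have $Bx = 0$ and thus, by Cramer’s formula, $\det(B)\, x = 0$. Since this holds for all $B$, every coordinate of $x$ is annihilated by $I_n(A)$, so the hypothesis of the theorem implies that $x = 0$. Thus $A$ is injective.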

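For the “only if” direction, suppose $A$ is injective. We may assume that $n \le m$, since once the theorem has been proved for square matrices, if $n > m$ we can add $n - m$ zero rows and the resulting $n \times n$ matrix is again injective but has determinant zero, a contradiction.

Let $r \in R$ be such that $rD = 0$ for every rank $n$ minor $D$ of $A$. We will prove by backward induction on $k$ that for all $1 \le k \le n$,

$$(P_k): \quad rD = 0 \text{ for every rank } k \text{ minor } D \text{ of } A.$$

By assumption, $(P_n)$ holds. Assume $(P_k)$ holds for some $2 \le k \le n$ and let $D$ be a minor of $A$ of rank $k-1$.

We may assume, without loss of generality, that $D$ is the minor corresponding to the first $k-1$ rows and first $k-1$ columns of $A$. For $1 \le j \le k$, let $D_j$ be the rank $k-1$ minor of $A$ obtained by deleting column $j$ from the matrix given by the first $k-1$ rows and first $k$ columns of $A$. (So, in particular, $D_k = D$.)

Define

$$x = \big( (-1)^{k+1} r D_1,\ (-1)^{k+2} r D_2,\ \ldots,\ (-1)^{2k} r D_k,\ 0, \ldots, 0 \big)^T \in R^n.$$

Fix $1 \le i \le m$ and write $A = (a_{ij})$. From the Laplace formula (expanding along the last row),

$$(Ax)_i = r \sum_{j=1}^{k} (-1)^{k+j} a_{ij} D_j = r \det(A_i),$$

where $A_i$ is the $k \times k$ matrix

$$A_i = \begin{pmatrix} a_{11} & \cdots & a_{1k} \\ \vdots & & \vdots \\ a_{k-1,1} & \cdots & a_{k-1,k} \\ a_{i1} & \cdots & a_{ik} \end{pmatrix}.$$

If $i \le k-1$, the determinant of $A_i$ is zero because it has two identical rows. If $i \ge k$, then $\det(A_i)$ is a rank $k$ minor of $A$, so $r \det(A_i) = 0$ by the inductive hypothesis $(P_k)$. Thus $Ax = 0$, and since $A$ is injective we conclude that $x = 0$. In particular, $rD = rD_k = 0$.

This completes the inductive step. In particular, $(P_1)$ holds, which means that $r a_{ij} = 0$ for all $i, j$ and hence $A(r e_1) = 0$, where $e_1$ is the first standard basis vector of $R^n$. Since $A$ is injective, it follows that $r = 0$ as desired. Q.E.D.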
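Read contrapositively, the induction is an algorithm: given a nonzero $r$ that kills every rank $n$ minor of $A$, it produces a nonzero vector in the kernel. Here is a sketch of that procedure, assuming $R = \mathbf{Z}/N\mathbf{Z}$. The function name `kernel_vector` and the use of index sets in place of the "without loss of generality" step are my own choices, and the code is meant as an illustration of the proof rather than an efficient implementation.

```python
from itertools import combinations


def det(M):
    """Integer determinant by Laplace expansion along the first row."""
    if not M:
        return 1
    return sum(
        (-1) ** j * M[0][j] * det([row[:j] + row[j + 1:] for row in M[1:]])
        for j in range(len(M))
    )


def kernel_vector(A, r, N):
    """Given r != 0 in Z/N killing every n x n minor of the m x n matrix A,
    return a nonzero x in (Z/N)^n with Ax = 0, following the inductive step."""
    m, n = len(A), len(A[0])

    def sub(rows, cols):
        return [[A[i][j] for j in cols] for i in rows]

    def kills_all_minors(k):
        return all(
            r * det(sub(I, J)) % N == 0
            for I in combinations(range(m), k)
            for J in combinations(range(n), k)
        )

    if r % N == 0 or not kills_all_minors(n):
        raise ValueError("r must be nonzero and annihilate all n x n minors")

    # Smallest k for which (P_k) holds; by minimality (P_{k-1}) fails if k > 1.
    k = next(k for k in range(1, n + 1) if kills_all_minors(k))
    x = [0] * n
    if k == 1:
        x[0] = r % N  # r kills every entry of A, so A(r e_1) = 0
        return x

    # A rank k-1 minor D (rows I, columns J) with r * D != 0.
    I, J = next(
        (I, J)
        for I in combinations(range(m), k - 1)
        for J in combinations(range(n), k - 1)
        if r * det(sub(I, J)) % N != 0
    )
    c = next(j for j in range(n) if j not in J)  # one extra column
    cols = sorted(J + (c,))
    for t, j in enumerate(cols):
        D_t = det(sub(I, [q for q in cols if q != j]))
        # Sign of the Laplace expansion along the last row (0-based indices).
        x[j] = (-1) ** (k - 1 + t) * r * D_t % N
    return x


print(kernel_vector([[2, 0], [0, 1]], 3, 6))  # det = 2 is killed by r = 3
print(kernel_vector([[2, 3]], 1, 6))          # n > m, so r = 1 works vacuously
```

When $n > m$ there are no rank $n$ minors at all, so $r = 1$ satisfies the hypothesis vacuously and the same procedure yields a nonzero solution as in Corollary 1.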

Coda

Define the determinantal rank of an $m \times n$ matrix $A$ with entries in a nonzero commutative ring $R$ with identity to be the largest nonnegative integer $k$ such that there is a nonzero $k \times k$ minor of $A$.

Motivated by McCoy’s theorem, define the McCoy rank of $A$ to be the largest nonnegative integer $k$ such that the annihilator of $I_k(A)$, the ideal generated by the $k \times k$ minors of $A$, is equal to zero.

One can deduce from McCoy’s theorem that both the row rank and column rank of $A$ are less than or equal to the McCoy rank of $A$, which is by definition less than or equal to the determinantal rank of $A$.
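Both of the new ranks are straightforward to compute by brute force over a small ring. The sketch below assumes $R = \mathbf{Z}/N\mathbf{Z}$ with $N > 1$, and the function names `determinantal_rank` and `mccoy_rank` are hypothetical. The $0 \times 0$ minor is taken to be $1$, so both ranks are always at least $0$.

```python
from itertools import combinations


def det(M):
    """Integer determinant by Laplace expansion along the first row."""
    if not M:
        return 1
    return sum(
        (-1) ** j * M[0][j] * det([row[:j] + row[j + 1:] for row in M[1:]])
        for j in range(len(M))
    )


def minors(A, k):
    """All k x k minors of A, as integers (the single 0 x 0 minor is 1)."""
    m, n = len(A), len(A[0])
    return [
        det([[A[i][j] for j in cols] for i in rows])
        for rows in combinations(range(m), k)
        for cols in combinations(range(n), k)
    ]


def determinantal_rank(A, N):
    """Largest k such that some k x k minor of A is nonzero in Z/N."""
    bound = min(len(A), len(A[0]))
    return max(k for k in range(bound + 1) if any(d % N for d in minors(A, k)))


def mccoy_rank(A, N):
    """Largest k such that only r = 0 kills every k x k minor of A in Z/N."""
    bound = min(len(A), len(A[0]))
    return max(
        k
        for k in range(bound + 1)
        if not any(all(r * d % N == 0 for d in minors(A, k)) for r in range(1, N))
    )


for A, N in [([[2, 3]], 6), ([[2]], 4), ([[2, 0], [0, 3]], 6)]:
    print(A, N, mccoy_rank(A, N), determinantal_rank(A, N))
```

For the $1 \times 1$ matrix $(2)$ over $\mathbf{Z}/4\mathbf{Z}$, the only $1 \times 1$ minor is nonzero but is killed by $2$, so the determinantal rank is 1 while the McCoy rank is 0.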

The following matrix over $\mathbf{Z}/210\mathbf{Z}$ has column rank 2, McCoy rank 3, and determinantal rank 4, and its row rank is also strictly smaller than its McCoy rank, showing that all of these inequalities can in general be strict:

Over an integral domain, however, all four notions of rank coincide.

References

McCoy’s theorem was first published in “Remarks on Divisors of Zero” by N. H. McCoy, The American Mathematical Monthly, May, 1942, Vol. 49, No. 5, pp. 286–295.

Our proof of McCoy’s theorem follows the exposition in the paper “An Elementary Proof of the McCoy Theorem” by Zbigniew Blocki, Univ. Iagel. Acta Math. no. 30 (1993), 215–218.

The McCoy rank of a matrix is discussed in detail in D.G. Northcott’s book “Finite Free Resolutions”.

I found the matrix in Stan Payne’s course notes entitled “A Second Semester of Linear Algebra”.

I’m confused by the example above Corollary 4; the lower right 2×2 minor has determinant 1, so shouldn’t that imply that the row and column ranks are both at least 2?

Oops, there was a typo! The ring should have been $\mathbf{Z}/210\mathbf{Z}$, not $\mathbf{Z}/10\mathbf{Z}$. I’ve fixed this now. Does this clear things up? The bottom two rows are linearly dependent, for example, because […]. And the determinant of the lower right $2 \times 2$ minor is 21, which is now a zero-divisor.

Phew! Yes, that totally clears things up.

There’s also something counter-intuitive to me about the fact that under the projection map $\mathbf{Z}/210\mathbf{Z} \rightarrow \mathbf{Z}/10\mathbf{Z}$, the rank actually *increases*. It’s clear why that happens here (the coefficients in the linear combination you wrote down are in the kernel of the projection map, as presumably are any coefficients that would work), but is there some more geometric way to picture that?

I don’t know of a deeper explanation for why base change can increase the rank.

These are some of the apocryphal theorems in algebra that no one ever teaches and that get rediscovered by every generation anew. When I was an undergrad, I found it by trying to generalize the (fairly well-known) square matrix case: https://artofproblemsolving.com/community/c7h124137 . The easier “only if” direction of McCoy’s theorem also appears in Keith Conrad’s https://kconrad.math.uconn.edu/blurbs/linmultialg/extmod.pdf (Theorems 5.10 and 6.4).

Note that an easier counterexample to Assertion 2 is the 1×2-matrix [2 3] over Z/6.

Thanks, Darij. Yes, one of my goals in this blog is to provide expositions of basic results like this which no one ever learns in a course. And thanks for the easier counterexample, I thought I was being clever by providing just one example to illustrate everything in the post but I now see that this was simply lazy, and I’ve updated the post accordingly.

Ah, that’s why! I somehow didn’t notice its second purpose.

Thanks for sharing very helpful