I’m teaching Graduate Algebra this semester, and I wanted to record here the proof I gave in class of the (existence part of the) structure theorem for finitely generated modules over a PID. It’s a standard argument, based on the existence of the Smith Normal Form for a matrix with entries in a PID, but it’s surprisingly hard to find a concise and accessible reference.

We assume familiarity with basic definitions in the theory of modules over a (commutative) ring. Our goal is to prove the following:

Theorem: Let

be a principal ideal domain and let

be a finitely generated

-module. Then

is isomorphic to a direct sum of cyclic

-modules. More precisely, there are a non-negative integer

(called the rank of

) and elements

(called the invariant factors of

) such that

for all

and

.

Remark: The rank of is uniquely determined, and the invariant factors of

are uniquely determined up to multiplication by a unit. There are many references for this uniqueness assertion, see for example Section 12.1 in Dummit and Foote’s “Abstract Algebra”. Alternatively, see my next blog post on Fitting ideals for a proof of a more general assertion.

The proof of the theorem will use the following two results, which will be established later:

Proposition 1: Let

be a PID and let

be a finitely generated free

-module of rank

(i.e.,

). Then any

-submodule of

is free of rank at most

.

Proposition 2: Let

be a PID, let

be nonnegative integers, and let

be an

matrix with entries in

. Then there exist an invertible

matrix

and an invertible

matrix

, both with coefficients in

, such that

is in Smith Normal Form. (This means that the last

rows of

are zero, and the square submatrix given by the first

rows is diagonal, with diagonal entries (from top left to bottom right)

such that

for all

. By abuse of terminology, we call

the invariant factors of

.)

Assuming these two results, we show how to prove the main theorem.

Proof of the Theorem: Suppose is generated by

. Then the map

given by

is a surjective $R$-module homomorphism, hence (by the first isomorphism theorem)

for some

-submodule

of

. By Proposition 1,

is free of rank

, i.e.,

.

Let be an ordered basis for

and let

be an ordered basis for

. Write

with

. Let

be the Smith Normal Form of

, and let

be the invariant factors of

. Then there exist ordered bases

for

and

for

(corresponding to the change-of-basis matrices

and

, respectively) such that

for

(exercise).

In other words, we have

But this latter quotient module is easily verified to be isomorphic to Q.E.D.

Proof of Proposition 1: Without loss of generality we may assume that . The proof is by induction on

.

The case is true because then

and

is an ideal in

, which is principal since

is a PID. Thus

for some

. If

then

is free of rank zero, and if

then the map

given by

is an

-module isomorphism (since

is a domain), so

is free of rank 1.

For the inductive step, let be the

-module homomorphism given by projection onto the last coordinate. Then

is isomorphic to

. If

is a submodule of

, we define

. By induction,

is free of rank at most

.

In addition, is an ideal of

, hence

for some

. Choose

with

and let

. If

then

and we’re done. So we may assume, without loss of generality, that

.

Claim: .

Given the claim, it follows (since is free of rank at most

and

is free of rank 1) that

is free of rank at most

, which proves the theorem.

To prove the claim, it suffices to show that (a) and (b)

. For (a), let

and choose

such that

. Then

, hence

. Thus

with

and

, proving (a). For (b), apply

to some element

. Since

we have

. On the other hand, since

we have

for some

, and then

. Thus

, which implies (since

is a domain) that

, and thus

. Q.E.D.

Proof of Proposition 2: Recalling that every PID is a UFD, define the “length” function by setting

and

iff

with

and

non-zero prime (= irreducible) elements of

. In particular,

iff

is a unit. It is easy to see, by unique factorization, that for all

we have:

(1) If then

.

(2) If and

then

and

are associate, i.e., they differ by multiplication by some unit.

We say that two matrices

with entries in

are equivalent if there exist

as above with

.

We proceed by induction on . The case

is trivial, and we may assume without loss of generality that

. Let

be a matrix equivalent to

such that

is minimal among all matrices equivalent to

.

If we swap two rows, or two columns, of any matrix we obtain an equivalent matrix (exercise). It follows easily that for all

.

Claim 1: for all

.

By swapping columns as needed, it suffices to prove that . Let

be a generator for the ideal generated by

and

. Then there exist

such that

. If

and

with

then

.

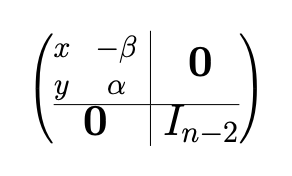

Define , where

is the following matrix:

(Note that is invertible, since its determinant is

.)

Then is equivalent to

and

. By the definition of

, we must have

. But also

, so that

by (1) above. So

, hence by (2)

and

are associate. But then

as desired, proving Claim 1.

By a similar argument using left-multiplication by a suitable matrix

instead of right-multiplication by

, we obtain

for all

(exercise).

By performing elementary row and column operations (which yield equivalent matrices), we arrive at a matrix equivalent to

which has all entries in the first row and first column equal to zero, except for the upper-left entry which is equal to

.

Let be the

matrix obtained from

by removing the first row and first column. By induction on

,

has a Smith Normal Form with diagonal entries

such that

for all

.

Claim 2: .

To see this, add the second row of to the first row. The resulting matrix is equivalent to

and its first two entries are

and

. Applying the proof of Claim 1 (and recalling that

) gives

, proving Claim 2.

Proposition 2 now follows, since Claim 2 implies that the matrix given by replacing the block

inside

with its Smith Normal Form is itself in Smith Normal Form. Q.E.D.

Concluding remarks

(1) One can prove the main theorem without Proposition 1 by first showing instead that if is a noetherian ring (e.g., a PID) then any submodule of a finitely generated module is finitely presented. This implies that for any finitely generated

-module

, there is an

matrix

with coefficients in

such that

is isomorphic to

. The rest of the proof now proceeds more or less as above, with the only differences being that the matrix

no longer necessarily has rank

and we don’t know that

(but these facts are not really crucial in the above argument).

(2) If is a Euclidean domain (which implies that

is a PID, but not conversely), we can get any

matrix

as above into Smith Normal Form using just elementary row and column operations. To see this, imitate the proof above but use the Euclidean norm

in place of the length function

. In our proof of Claim 1, we had to right-multiply by

, which doesn’t correspond in general to an elementary column operation. However, if

is Euclidean then we can instead write

with

or

. If we add

times column 1 of

to column 2 and then swap columns 1 and 2, we obtain a matrix equivalent to

whose upper-left entry is

. This contradicts the choice of

unless

, which means that

as claimed. (An example of a PID which is not a Euclidean domain is

, see e.g. this paper.) Note that this proof can easily be turned into an algorithm for computing the Smith Normal Form of a matrix over a Euclidean domain.

(3) The idea behind the proof of uniqueness of the rank and invariant factors given in Dummit and Foote is as follows. The rank of can be recovered as the maximal number of

-linearly independent elements of

. By the Chinese Remainder Theorem, if

in

with the

distinct nonzero prime elements, then

Thus we can write

as a direct sum of

and some number of cyclic

-modules, each isomorphic to

for some

and some

. The nonzero prime-powers

which occur are called the elementary divisors of

; they are defined only up to associates. One can recover the invariant factors from the elementary divisors (exercise), so it suffices to prove that the elementary divisors are unique up to reordering and multiplying by units. For this, one shows that if

is a nonzero prime element, we have

, where

is the number of elementary divisors

with

. It follows easily that for each

and

, the number of elementary divisors of the form

is uniquely determined by

.

For different proof, which works directly with the invariant factors without first converting to elementary divisors, see my next blog post on Fitting ideals.

(4) An integral domain is called a Bézout domain if every finitely generated ideal is principal. It is an open question whether every matrix over a Bézout domain

is equivalent to a matrix in Smith Normal Form. (The ring of all algebraic integers is an example of a Bézout domain which is not a PID.)

(5) Two matrices

and

over a field

are similar over

if and only if the characteristic matrices

and

have the same Smith Normal Form over

. This is a well-known consequence of the structure theorem for finitely generated modules over a PID. Note that the minimal polynomial of

is equal to the largest invariant factor of

and the characteristic polynomial is the product of all the invariant factors of

.

(6) Richard Stanley has written this survey paper about applications of the Smith Normal Form to combinatorics, one of which concerns the Jacobian group (a.k.a. the critical group) of a graph.

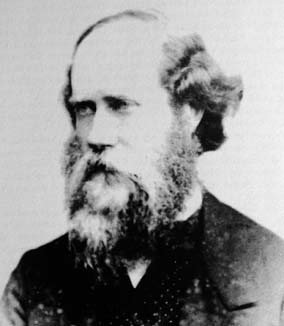

(7) The Smith Normal Form is named for Henry John Stephen Smith (1826-1883), an Irish mathematician who also discovered a version of the Cantor set before Cantor did.

Dear Matt I agree that it’s hard to find a convenient reference for this. I spent a lot of time writing something for my â(Mostly) commutative algebraâ book that I would myself find reasonable. At the end, I gave many proofs… Best Antoine

⣖ Université de Paris â Diderot Institut de mathématiques de Jussieu Paris Rive Gauche Bâtiment Sophie Germain, 8 place Aurélie Nemours, 75205 Paris cedex 13

Envoyé depuis mon téléphone portable, pardonnez mon éventuelle brièvetéâ

Hi Antoine – Thanks for the comment! Believe it or not, despite the fact that you’re one of my favorite mathematical expositors I had never looked at your “(Mostly) commutative algebra” book until this morning. Looks terrific, thanks for the reference. Cheers, Matt

Pingback: Fitting ideals of modules | Matt Baker's Math Blog